I am sharing here the yearly paper analysis for 2021, containing statistics about ML and NLP publications from the past year. It has arrived later than in previous years: the new data required some manual cleaning and updates to the pipeline, so preparing it took considerably longer than intended. But finally, here it is. 🙂

The analysis of the papers is done using a series of automated tools. These processes are not perfect, so some noise and errors may occur. Some authors have also recently started releasing their papers in an obfuscated form that prevents any copying or automated extraction of the content, so these papers had to be excluded from some of the analysis. But overall, each year the pipeline gets a bit better and the bugs from previous years get fixed, so it should provide a good picture of the field.

Many thanks to Chen Cecilia Liu and Jonas Pfeiffer for their help with matching organizations to countries!

This post isn't meant to glorify publishing huge numbers of papers. Quality is definitely more important than quantity. In fact, I think our field has gotten too focused on publishing rapidly in large quantities, which tends to give an advantage to quick iterative papers over thorough groundbreaking ideas. The aim of this analysis is just to provide a bit of a higher-level view of what is happening in the field, which institutions are currently the major players and which researchers have the largest groups.

Venues

Let's start by looking at the conferences themselves. The publication numbers for most of the conferences again kept going up and breaking new records. One exception seems to be ACL - this is likely due to a heavier use of the Findings format, which I didn't include in these statistics. AAAI seems to be almost levelling out, whereas NeurIPS still keeps a steady growth rate. COLING and AACL did not take place in 2021, but both EACL and NAACL did.

Organizations

The organization with most published papers in 2021 (by a wide margin) was Google. Microsoft also manages to offer some competition in that space, with CMU, Stanford, Facebook and MIT ranking after that. Microsoft, CAS, Amazon, Tencent, Cambridge, Washington and Alibaba stand out as having quite a large proportion of papers at NLP conferences, whereas the other top organizations seem to focus mostly on ML venues.

Looking at the statistics for the whole period of 2012-2021, Google with 2170 papers has finally overtaken Microsoft with 2013 papers. CMU, with 1881 publications, completes the top 3.

Most of the organizations have also continued to increase their yearly publication count. Google has finally broken their linear acceleration in publication numbers, but still released more papers than ever before. CMU had a plateau last year but has made up for it this year. IBM seems to be the only top organization with a slight downward trajectory, likely related to them selling large parts of Watson recently.

Authors

Next, let's look at the researchers who published the most papers in 2021. Sergey Levine (Berkeley, Google) towers above everyone else with 42 papers. Tie-Yan Liu (Microsoft), Jie Zhou (Tsinghua), Mohit Bansal (UNC) and Graham Neubig (CMU) are also among the top publishing researchers.

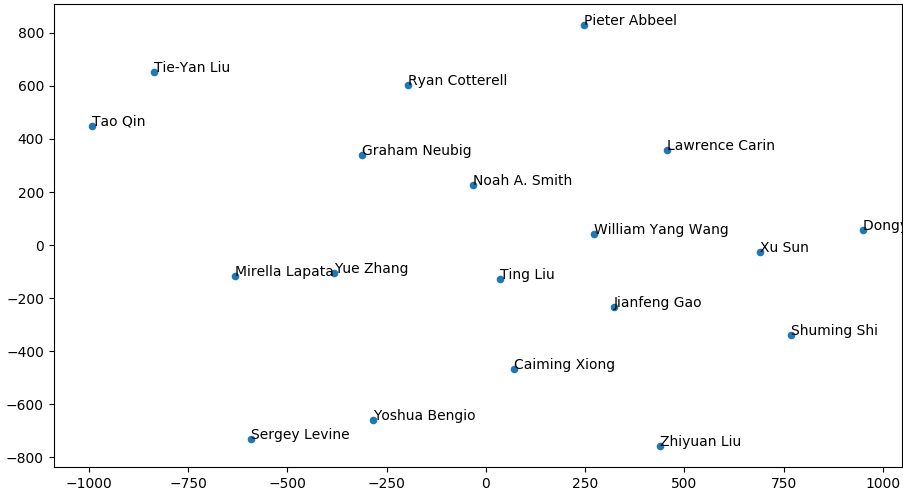

Looking at the whole period of 2012-2021, Sergey Levine (Berkeley, Google) is again at the top position. Having ranked 6th last year, he had a really prolific year and has overtaken everyone else. Yoshua Bengio (Montreal), Graham Neubig (CMU), Yue Zhang (Westlake), Ming Zhou (Sinovation, Microsoft) and Ting Liu (Harbin) make up the rest of the overall top publishers.

Note that a couple of names have been manually removed from the list. While trying to identify their affiliation, I found that publications from multiple researchers with identical names have been aggregated. Unfortunately I don't have the necessary technology at the moment to separate the publications in such cases.

The breakdown over the years gives an overview of when each researcher published the most. Sergey Levine has set the new overall record, by quite a margin. Mohit Bansal also increased his paper output substantially, releasing 31 publications in 2021, the same number as Graham Neubig. Yoshua Bengio had a decrease in the number of papers in 2020, but that count is now back up again.

First Authors

The researchers with the largest numbers of papers are generally supervisors for many post-docs and students working in their group. In contrast, first authors are usually those who do the practical work, so it's good to analyse their counts separately.

Ramit Sawhney (Tower Research Capital, IIIT Delhi) published a very impressive 9 papers in 2021. Jason Wei (Google Research) and Tiago Pimentel (Cambridge) also stand out with 6 publications.

Looking at the year range 2012-2021, Ivan Vulić (Cambridge, PolyAI) and Zeyuan Allen-Zhu (Microsoft) have both managed to publish an impressive 24 papers as first authors. Yi Tay (Google) and Jiwei Li (Shannon.AI, Zhejiang, Stanford) are ranked next, with 23 and 22 papers, respectively. Ilias Diakonikolas (UW Madison) stands out with 15 first-author NeurIPS papers. Haris Aziz (UNSW Sydney) has published exclusively in AAAI.
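To make the difference between the two kinds of counts concrete, here is a minimal sketch of how overall and first-author tallies could be computed. It is not the actual pipeline used for this post: the `count_authors` function and the toy author lists are hypothetical, and it assumes each paper is available as an ordered list of author names.

```python
from collections import Counter

def count_authors(papers):
    """Return (all_author_counts, first_author_counts) for a list of papers.

    Each paper is an ordered list of author name strings (an assumption
    made for this sketch). Authors are matched purely by name string.
    """
    all_counts = Counter()
    first_counts = Counter()
    for authors in papers:
        if not authors:
            continue  # skip papers where no authors could be extracted
        all_counts.update(set(authors))  # count each name once per paper
        first_counts[authors[0]] += 1    # only the first listed author
    return all_counts, first_counts

# Hypothetical toy data: three papers with placeholder author names.
toy_papers = [
    ["Author A", "Author B", "Author C"],
    ["Author D", "Author C"],
    ["Author A", "Author C"],
]
all_counts, first_counts = count_authors(toy_papers)
print(all_counts.most_common(2))
print(first_counts.most_common(2))
```

In this toy data the last-listed "Author C" has the most papers overall but no first-author papers, which is the supervisor pattern described above. Matching on the name string alone also merges different people who share a name, which is why a couple of names had to be removed from the lists by hand.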

Countries

Looking at the 2021 publication counts by country highlights how much the United States publishes. China and the UK are also among the top 3. NeurIPS has the largest proportion for the US and the UK, while AAAI is the preferred venue for China.

Nearly all the top countries have continued to increase their publication counts, setting new individual records in 2021. For the US, this increase is the largest, further widening the lead.

USA

As the US publishes so much, their graph for 2021 looks very similar to the overall graph. Google, Microsoft and CMU are again at the top of the publishing counts.

China

In China, Tsinghua, CAS and Peking published the most in 2021.

UK

DeepMind, Oxford and Cambridge are towering above the rest in the UK.

Canada

Toronto really stands out in Canada, with the Vector Institute, McGill and Montreal also being well-represented.

Germany

In Germany, Tübingen and Robert Bosch published more than 40 papers.

South Korea

In South Korea, KAIST has an impressive lead over the other organizations. Seoul and Samsung also stand out.

Topic Similarity

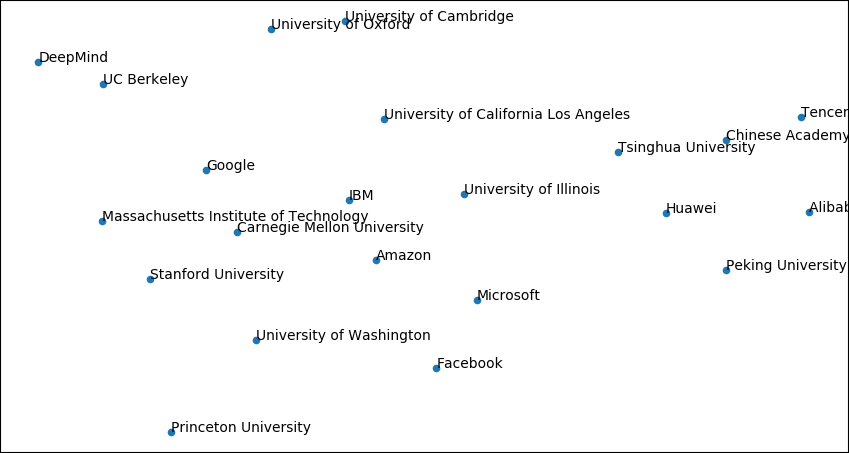

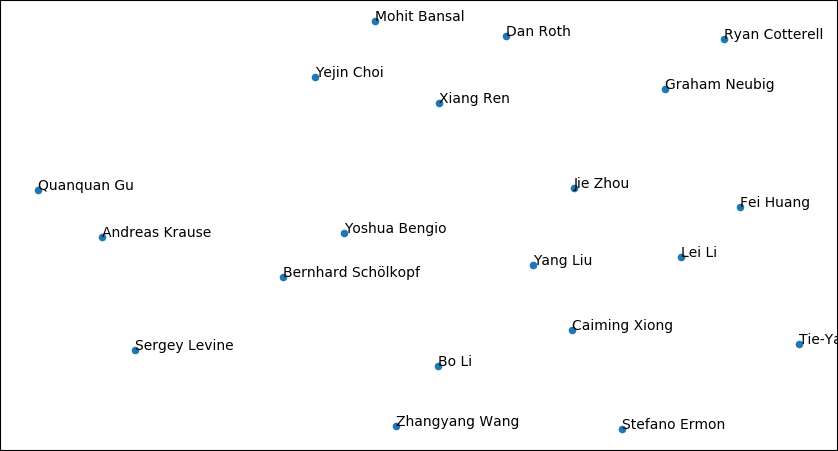

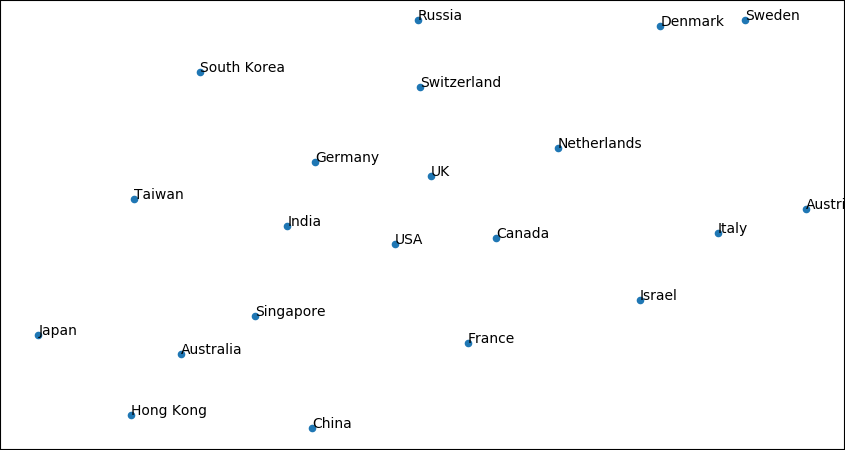

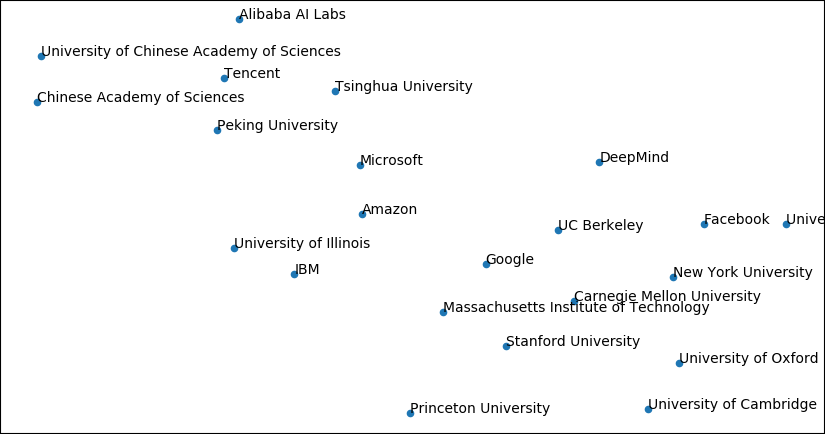

For this section, I ran the papers through LDA and then visualised them using t-SNE.

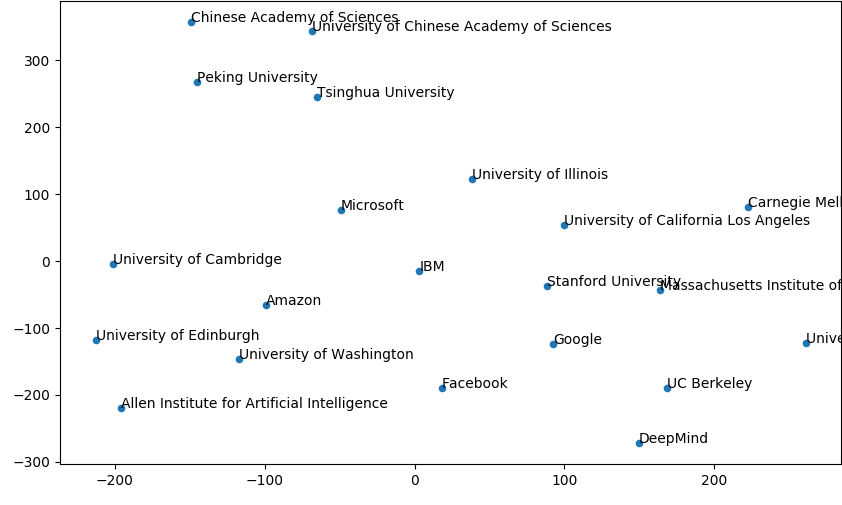
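For readers curious what such a pipeline might look like, here is a rough sketch of the LDA and t-SNE steps. The use of scikit-learn, the toy texts, the `embed_entities` function and all parameter values are assumptions made for illustration; they are not the settings used to produce the plots in this post. The sketch assumes that the text of all papers belonging to one entity (a venue, organization, author or country) has been concatenated into a single document.

```python
import numpy as np
from sklearn.decomposition import LatentDirichletAllocation
from sklearn.feature_extraction.text import CountVectorizer
from sklearn.manifold import TSNE

# Hypothetical toy corpus: one concatenated text per entity.
texts_by_entity = {
    "org_a": "neural machine translation attention transformer decoding",
    "org_b": "reinforcement learning policy reward robot control",
    "org_c": "transformer language model pretraining translation",
    "org_d": "policy gradient reward exploration reinforcement",
    "org_e": "image convolutional segmentation detection vision",
    "org_f": "vision image classification convolutional adversarial",
}

def embed_entities(texts_by_entity, n_topics=3, perplexity=2.0, seed=0):
    """Map each entity to a 2D point via LDA topic proportions and t-SNE."""
    names = list(texts_by_entity)
    # Bag-of-words counts, one row per entity.
    counts = CountVectorizer().fit_transform([texts_by_entity[n] for n in names])
    # Topic proportions per entity; each row sums to 1.
    lda = LatentDirichletAllocation(n_components=n_topics, random_state=seed)
    topic_mix = lda.fit_transform(counts)
    # Project the topic proportions to 2D. Perplexity must be smaller than
    # the number of entities, hence the tiny value for this toy corpus.
    tsne = TSNE(n_components=2, perplexity=perplexity, init="random",
                random_state=seed)
    coords = tsne.fit_transform(topic_mix)
    return names, topic_mix, coords

names, topic_mix, coords = embed_entities(texts_by_entity)
for name, (x, y) in zip(names, coords):
    print(f"{name}: ({x:.2f}, {y:.2f})")
```

Entities with similar topic mixtures should end up close together in the 2D plot, although t-SNE layouts change with the random seed and perplexity, so the exact positions carry no meaning on their own.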

Visualization of the organizations shows that they are mostly clustered according to geographical proximity, with companies centered in the middle.

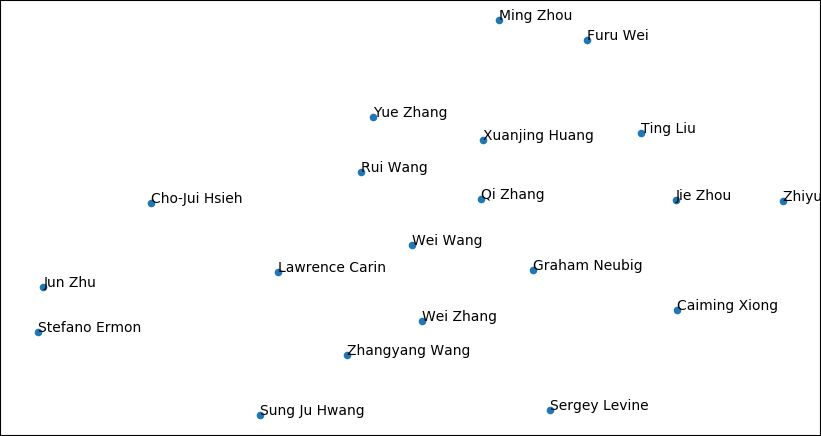

We can do the same visualization for the authors, although these clusters are a bit more difficult to interpret.

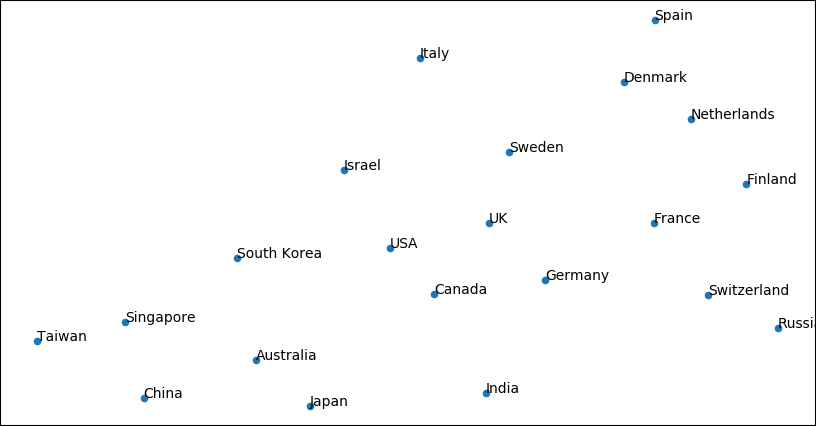

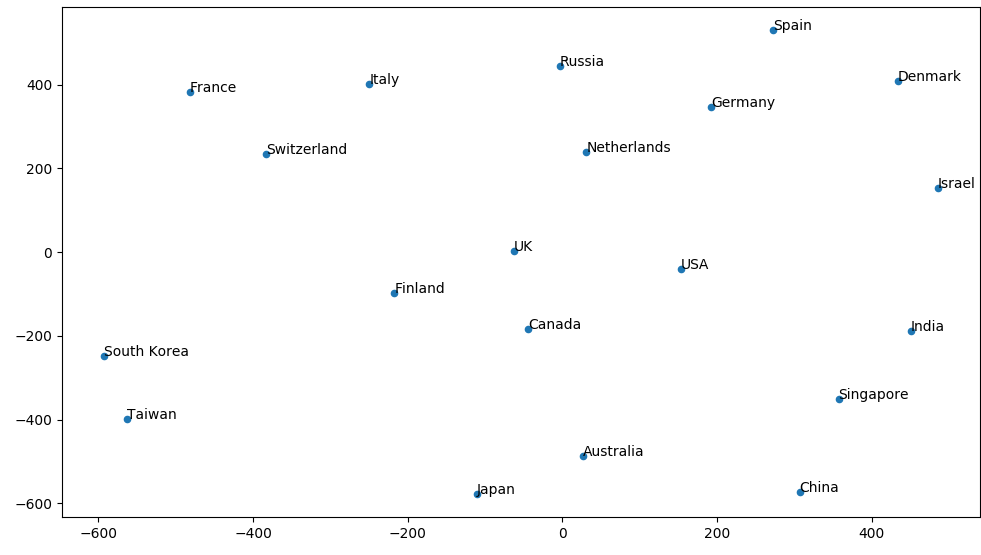

The countries are again clustered based on geography, this time with USA in the middle of the graph.

Keywords

We can also plot the proportion of papers that contain a particular keyword and track how this has changed over time.

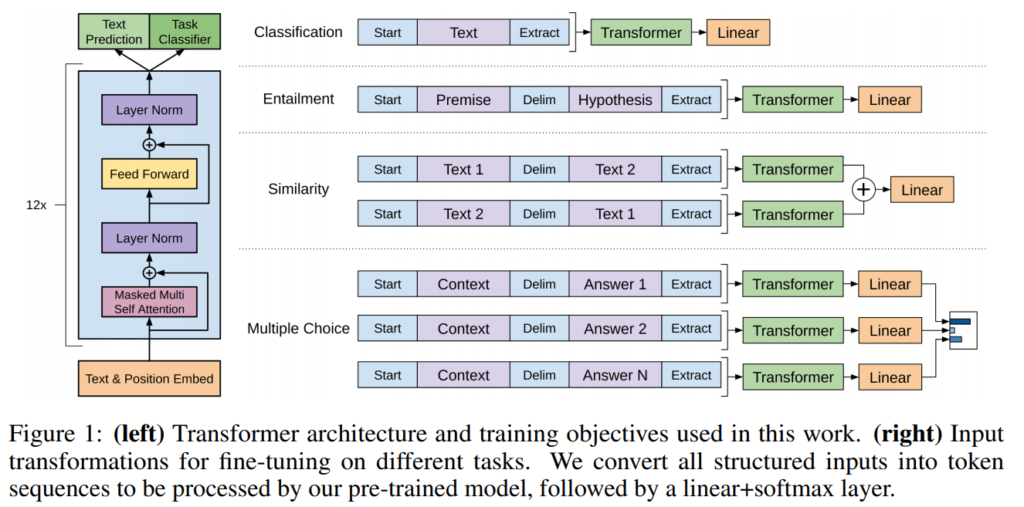

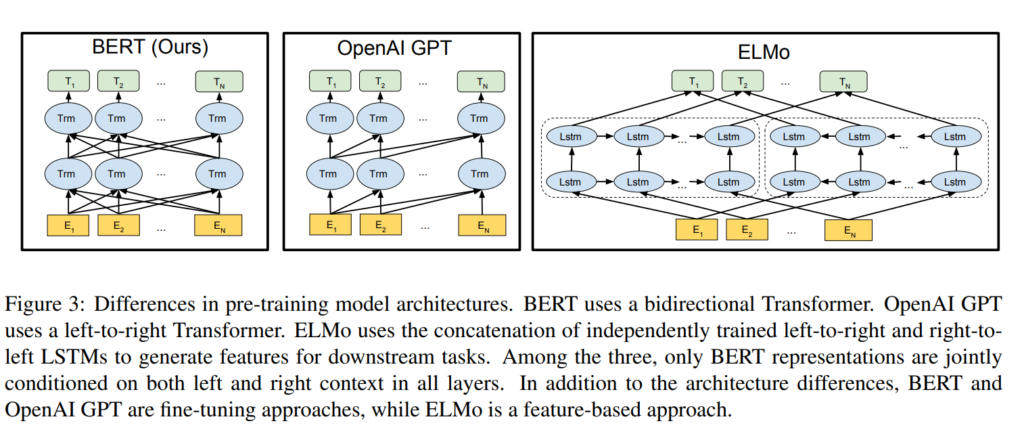

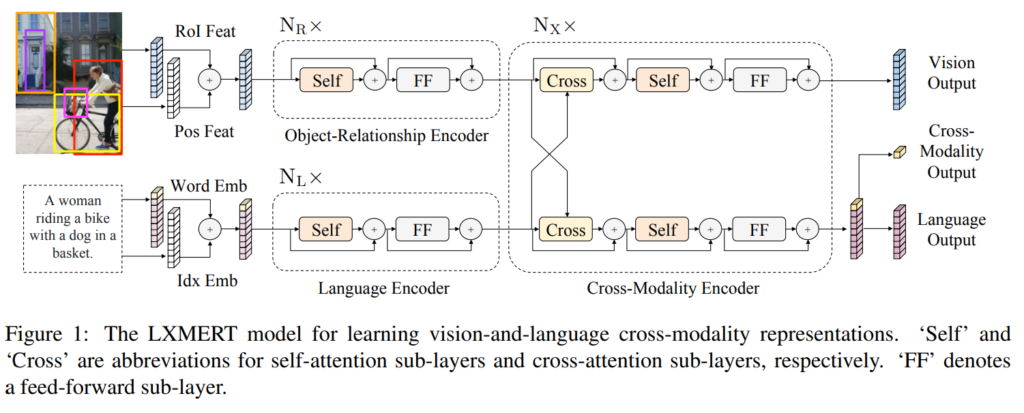

The word "neural" seems to have a very slight downward trend, although it can still be found in more than 80% of the papers. The proportions of the words "recurrent" and "convolutional" are also decreasing, whereas "transformer" can now be found in more than 30% of the papers.

Looking at just the keyword "adversarial", we see that it is particularly popular in ICLR, with almost half of the papers using it. The count for "adversarial" seems to have peaked for ICML and NeurIPS, while it has been steadily increasing for AAAI.

The keyword "transformer" has gotten very popular in the past couple of years. It is particularly widely used in NLP papers, with over 50% of the publications containing it, but its popularity is also steadily increasing across all the ML conferences.
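The keyword proportions in this section could be computed roughly as in the sketch below. It is only an illustration: the `keyword_proportion_by_year` function and the toy paper texts are hypothetical, and the matching rule (case-insensitive, anchored at the start of a word so that "transformer" also matches "transformers") is an assumption rather than the exact rule used for the plots.

```python
import re
from collections import defaultdict

def keyword_proportion_by_year(papers, keyword):
    """Return {year: fraction of that year's papers containing the keyword}.

    papers is an iterable of (year, text) pairs. A paper counts once no
    matter how many times the keyword appears in it.
    """
    # Match at a word start only, so plural forms are included.
    pattern = re.compile(r"\b" + re.escape(keyword), re.IGNORECASE)
    totals = defaultdict(int)
    hits = defaultdict(int)
    for year, text in papers:
        totals[year] += 1
        if pattern.search(text):
            hits[year] += 1
    return {year: hits[year] / totals[year] for year in sorted(totals)}

# Hypothetical toy data: (year, paper text) pairs.
toy_papers = [
    (2019, "A recurrent neural network for parsing"),
    (2019, "Convolutional models for vision"),
    (2021, "Transformers for translation"),
    (2021, "A transformer language model"),
    (2021, "Graph kernels revisited"),
    (2021, "Neural ranking"),
]
print(keyword_proportion_by_year(toy_papers, "transformer"))
# {2019: 0.0, 2021: 0.5}
```

Grouping by (venue, year) instead of year alone would give the per-conference curves, such as the ones for "adversarial".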

Fun Facts

And a couple more random facts about 2021 to finish:

- Most authors on a single paper: MasakhaNER: Named Entity Recognition for African Languages in TACL with 61 authors. This doesn't quite take the all-time record but gets an honorable second position.

- The longest paper title: A theory of high dimensional regression with arbitrary correlations between input features and target functions: sample complexity, multiple descent curves and a hierarchy of phase transitions at ICML. This title actually takes the all-time record as well.

- The shortest paper title: Light RUMs at ICML.